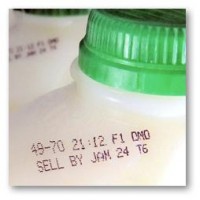

Check the Expiration Date on That Measure

The views expressed are those of the author and do not necessarily reflect the views of ASPA as an organization.

By Amy Johnson

July 10, 2015

If an organization wants to use performance information to make good decisions and support mission attainment, disciplined execution of a sound measurement strategy is essential. Part of that strategy is a regular review of what is being measured to ensure that it is still relevant and useful.

All too often, a key stakeholder (e.g., a major donor to a nonprofit organization, an organizational leader or a program manager) will ask for data in an attempt to answer a question or address a challenge. Someone runs a report. Extracting the data may be easy, or it may take some mental engineering and programming. With new data visualization software, an individual with basic analytic skills and some initiative can become a data ninja, slicing and dicing data in different ways to produce impressive bubble charts, heat maps, tables and pie charts.

Data is generated. Analysis is performed. Reports are submitted. There may be an acknowledgement of the effort. There may even be a discussion of the data and action taken based on perceptions of what it means. At this point, the analysis and reports can take on unwarranted importance. Proactive employees and lower-level managers, eager to please, keep producing the report, perhaps investing additional effort to enhance it. More senior managers get used to having the report appear; they may even continue to review the data and ask questions about it. Eventually, the employee responsible for producing the report moves to a new job, and production of the report becomes part of the position description.

Multiply this level of effort by all of the smart questions asked over the course of a month, a quarter or a year in an effort to run a program or organization, and we find ourselves buried in data and reports. Data collection and reporting become the activity itself rather than a source of information that informs an activity. With too much data to review, we fall back on experience and gut feeling to make decisions. We may even create unintended behaviors as employees reprioritize work to improve performance against measures that nobody uses in a meaningful way.

Adopting a well-thought-out measurement strategy will produce meaningful data. In turn, this will promote a better understanding of what drives organizational performance, including the interactions among people, processes, tools and technology, since these relationships also affect the ability to achieve results. The strategy is anchored by a performance measurement framework that outlines the linkages between an organization's mission objectives and programmatic delivery, with clearly defined measures for both. This framework provides a structure for focusing data collection and reporting.

Leaders and managers can still ask for ad hoc metrics to address unique challenges. However, each request should be compared against the objectives and measures defined in the framework. Metrics that do not provide enduring insights are time-bound, with the option to revisit the value of the data collection, analysis and reporting at the end of the defined collection period.

As part of annual strategic planning activities, leaders and managers should review the measurement framework for alignment with current goals and objectives. While strategic-level goals may not change much over time, key elements of the programs and activities designed to achieve those goals likely will. At the program level, managers should review operational objectives, the activities performed to deliver against those objectives and the data routinely collected to determine the effectiveness of delivery, confirming that the measures in use still provide useful insights.

The need for timely data is well understood, particularly when data is used to make operational decisions. Nobody wants to make a significant change to practice or policy based on old data. As we strive to be more systematic about data collection, review, reporting and use, we must remember to check the expiration dates of our measures as well.

Author: Amy Johnson has almost two decades of experience working with public and private sector clients in the areas of program and process evaluation, strategic planning and organizational performance management. She serves as the director for nonprofit and civilian government programs at Coverent, a consulting firm focused on helping clients to use data analytics to measure program performance and maximize mission impact. Contact at [email protected].
